In the rapidly evolving field of visual representation learning, the search for models that are more efficient without sacrificing accuracy has been relentless. The recent Vision Mamba paper introduces Vim, a generic vision backbone built on bidirectional state space models (SSMs). It tackles two challenges that SSMs face on visual data: position sensitivity and the need for global context. In doing so, Vision Mamba offers a promising alternative to Vision Transformers (ViTs). In this post, we’ll explore the core contributions of Vision Mamba and what they mean for the future of visual learning.

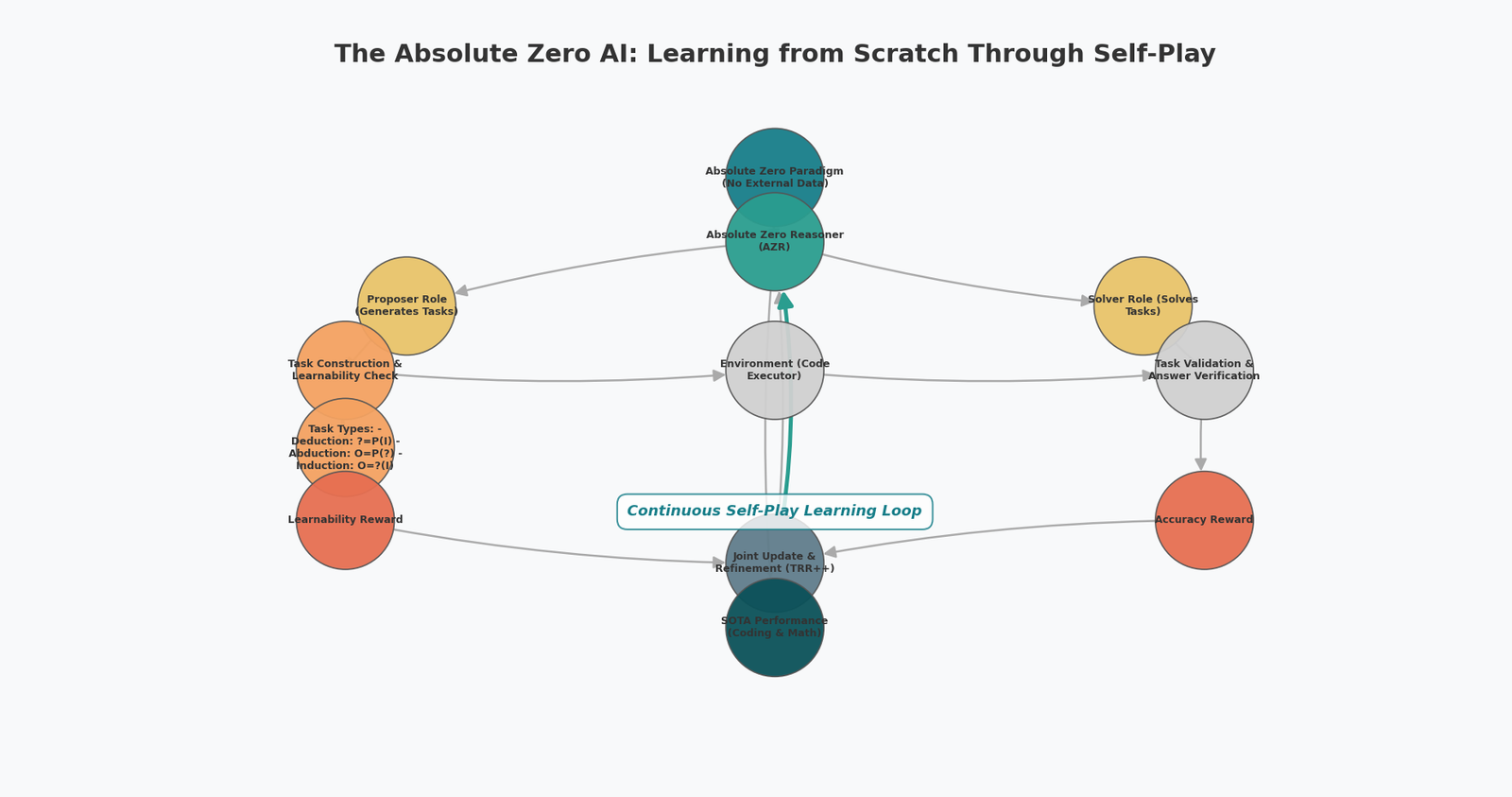

At its core, Vision Mamba is an architecture designed to reduce the computational cost of traditional vision transformers. Transformers rely on self-attention, which is effective but expensive. Every image patch attends to every other patch, so the cost grows quadratically with the number of patches and quickly becomes a bottleneck for high-resolution images. Vision Mamba instead uses bidirectional state space models, bypassing the need for attention altogether.

The Vim backbone model introduces two key innovations:

- Bidirectional SSMs: Vim treats an image as a sequence of patches and compresses the visual representation with state space models that scan this sequence in both the forward and backward directions, so each patch can draw on context from both sides. Each scan runs in time linear in the sequence length, which removes much of the computational and memory burden that self-attention imposes, especially in high-resolution tasks.

- Position Embeddings: Unlike the original Mamba, which was designed for language and uses no position embeddings, Vim marks the patch sequence with position embeddings. Because visual data is position-sensitive, this spatial information matters for location-aware tasks such as object detection and semantic segmentation.
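To make the bidirectional idea concrete, here is a deliberately simplified Python sketch. It is not Vim's implementation. Each token is a single number instead of a vector. The SSM uses fixed scalar parameters `a`, `b`, `c` (hypothetical values) instead of Mamba's input-dependent (selective) parameters. The same parameters are reused for both directions for brevity, and the two directions are merged by simple addition. What it does show is how position embeddings are added to the patch tokens and how a forward and a backward linear-time scan give every position context from both sides.

```python
from typing import List


def ssm_scan(xs: List[float], a: float, b: float, c: float) -> List[float]:
    """One pass of a linear recurrence: h_t = a*h_{t-1} + b*x_t, y_t = c*h_t.

    Simplified stand-in for an SSM layer; runs in O(N) for N tokens.
    """
    h = 0.0
    ys = []
    for x in xs:
        h = a * h + b * x
        ys.append(c * h)
    return ys


def bidirectional_ssm(tokens: List[float], pos_emb: List[float],
                      a: float = 0.5, b: float = 1.0, c: float = 1.0) -> List[float]:
    """Toy bidirectional scan (assumed simplification, not the paper's block)."""
    # Add position embeddings so each token carries information about its location.
    xs = [t + p for t, p in zip(tokens, pos_emb)]
    # Forward pass: each position sees only the tokens before it.
    forward = ssm_scan(xs, a, b, c)
    # Backward pass: scan the reversed sequence, then flip the outputs back.
    backward = ssm_scan(xs[::-1], a, b, c)[::-1]
    # Summing the two directions gives every position context from both sides.
    return [f + bw for f, bw in zip(forward, backward)]
```

With an impulse on the last token, `bidirectional_ssm([0.0, 0.0, 0.0, 1.0], [0.0] * 4)` returns `[0.125, 0.25, 0.5, 2.0]`. A forward-only scan would leave the first three positions at zero, because in a unidirectional pass earlier tokens never see later ones.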

Architecture overview of Vision Mamba (screenshot from the paper).

Performance and Efficiency

One of the most striking claims from the paper is the efficiency gain Vim provides over Vision Transformers. The paper reports that, when performing batch inference to extract features from high-resolution images (1248×1248 pixels), Vim is 2.8x faster than DeiT, a well-known vision transformer, and uses 86.8% less GPU memory. This opens up opportunities for large-scale image processing in fields like medical imaging or remote sensing, where efficiency is critical.
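Percentage savings and speedup factors are easy to misread, so the short Python snippet that follows converts the two reported figures into plain ratios. It only does arithmetic on the numbers quoted above; the function name `interpret_efficiency` is hypothetical.

```python
from typing import Tuple


def interpret_efficiency(speedup: float, memory_saving_pct: float) -> Tuple[float, float, float]:
    """Turn "Nx faster" and "P% less memory" into fractions of the baseline.

    speedup: how many times faster the new model is (e.g. 2.8).
    memory_saving_pct: percentage of memory saved (e.g. 86.8).
    """
    time_fraction = 1.0 / speedup                       # share of the baseline's run time
    memory_fraction = 1.0 - memory_saving_pct / 100.0   # share of the baseline's memory
    memory_reduction = 1.0 / memory_fraction            # "N times less memory"
    return time_fraction, memory_fraction, memory_reduction


time_frac, mem_frac, mem_factor = interpret_efficiency(2.8, 86.8)
print(f"Run time: {time_frac:.1%} of DeiT's")
print(f"Memory: {mem_frac:.1%} of DeiT's (about {mem_factor:.1f}x less)")
```

In other words, using 86.8% less memory means Vim needs roughly 13.2% of DeiT's memory in that setting, about a 7.6x reduction, while taking roughly 36% of its run time.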

Performance comparison between DeiT and Vim (screenshot from the paper).

Vim also delivers higher accuracy across several visual tasks. On ImageNet-1K classification, it outperforms DeiT at comparable model sizes: Vim-Tiny achieves 76.1% top-1 accuracy, 3.9 points higher than DeiT-Tiny. On downstream tasks, including semantic segmentation on ADE20K and object detection and instance segmentation on COCO, Vim also outperforms its DeiT counterparts.

Why Is This Important?

A shift from transformers to state space models for vision tasks would be significant because of what it could enable for large-scale, high-resolution data processing. Transformers have become ubiquitous in machine learning, particularly in NLP and vision. However, as models and inputs grow, their resource demands can become a bottleneck. By introducing a more memory- and compute-efficient architecture, Vision Mamba could help democratize access to advanced visual AI, making it feasible to deploy such models in resource-constrained environments.

Additionally, because Vim's cost grows linearly with sequence length, it can process much longer sequences (that is, larger images split into more patches) without the quadratic cost of self-attention. This could be a game-changer in applications like gigapixel medical image analysis, which requires both high resolution and long-range context.
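A rough way to see the difference between linear and quadratic scaling is to count token interactions as the image grows. The Python sketch below assumes 16×16 patches and ignores the class token, hidden size, state size, and all constant factors. It therefore compares growth rates only, not actual FLOPs, memory, or run time. The helper names are hypothetical.

```python
def num_patches(image_size: int, patch_size: int = 16) -> int:
    """Number of non-overlapping patch tokens for a square image (assumed 16x16 patches)."""
    per_side = image_size // patch_size
    return per_side * per_side


def interaction_counts(image_size: int, patch_size: int = 16):
    """Return (tokens, attention pairs, bidirectional scan steps)."""
    n = num_patches(image_size, patch_size)
    attention_pairs = n * n   # self-attention: every token interacts with every token
    scan_steps = 2 * n        # one forward and one backward recurrent step per token
    return n, attention_pairs, scan_steps


for size in (224, 512, 1248):
    n, attn, scan = interaction_counts(size)
    print(f"{size}x{size}: {n} tokens, attention ~{attn:,} pairs, "
          f"bidirectional scan ~{scan:,} steps")
```

Going from 224×224 to 1248×1248 multiplies the token count by about 31 (196 to 6084). Under these assumptions the scan work grows by the same factor, while the number of attention pairs grows by roughly 960x (38,416 to 37,015,056).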

What’s Next?

Vision Mamba offers an exciting alternative for visual representation learning, particularly in domains requiring high-resolution image processing. Its efficiency and performance gains over traditional transformer models make it a potential frontrunner in this space.

Future research could apply Vim to unsupervised learning tasks, such as masked image modeling, or to multimodal tasks that combine vision and language, such as CLIP-style pretraining. Using Vision Mamba for high-resolution downstream tasks like remote sensing and medical imaging, where its efficiency would be particularly advantageous, is another direction worth exploring.

Final Thoughts

Vision Mamba represents a step forward in efficient visual representation learning. By bypassing the need for attention mechanisms and incorporating the strengths of bidirectional state space models, it not only improves computational efficiency but also maintains, and often exceeds, the performance of transformer models. As the field continues to evolve, Vision Mamba could play a pivotal role in defining the next generation of visual backbones.

This article is based on the research paper Vision Mamba: Efficient Visual Representation Learning with Bidirectional State Space Model.

Zhu, L., Liao, B., Zhang, Q., Wang, X., Liu, W., & Wang, X. (2024). Vision Mamba: Efficient visual representation learning with bidirectional state space model. arXiv preprint arXiv:2401.09417.
